What’s going on in the world of cloud standards? Since the initial publication of the National Institute of Standards and Technology (NIST) definition of cloud computing in NIST SP 800-145 in 2011, international standards development organizations (SDOs) have sought to refine and expand the cloud computing landscape. On February 13, 2020 at our next live SNIA Cloud Storage Technologies Initiative webcast “Cloud Standards: What They Are, Why You Should Care” we will dive into the cloud standards worth noting as Eric Hibbard, standards expert and ISO editor, will discuss:

What the “T” Means in SNIA Cloud Storage Technologies

The SNIA Cloud Storage Initiative (CSI) has had a rebrand; we’ve added a T for Technologies into our name, and we’re now officially the Cloud Storage Technologies Initiative (CSTI).

That doesn’t seem like a significant change, but there’s a good reason. Our old name reflected the push to gain acceptance of cloud storage, and that specific cloud storage debate has been won, and big time. One relatively small cloud service provider is currently storing 400PB of clients’ data. Twitter alone consumes 300PB of data on Google’s cloud offering. Facebook, Amazon, AliBaba, Tencent – all have huge data storage numbers.

Enterprises of every size are storing data in the cloud. That’s why we added the word “technologies.” The expanded charter and new name reflect the need to support the evolving cloud business models and architectures such as OpenStack, software defined storage, Kubernetes and object storage. It includes data services, orchestration and management, understanding hyperscale requirements and the role standards play.

So what do we do? The CSTI is an active group that publishes articles and white papers, speaks at industry conferences and presents at highly-rated webcasts that have been viewed by thousands. You can learn more about the CSTI and check out the Infographic for highlights on cloud storage trends and CSTI activities.

If you’re interested in cloud storage technologies, I encourage you to consider joining our group. We have multiple membership options for established vendors, startups, educational institutions, even individuals. Learn more about CSTI membership here.

Evaluator Group to Share Hybrid Cloud Research

In a recent survey of enterprise hybrid cloud users, the Evaluator Group saw that nearly 60% of respondents indicated that lack of interoperability is a significant technology issue that they must overcome in order to move forward. In fact, lack of interoperability was the number one issue, surpassing public cloud security and network security as significant inhibitors.

The SNIA Cloud Storage Initiative (CSI) is pleased to have John Webster, Senior Partner at Evaluator Group, who will join us on December 12th for a live webcast to dive into the findings of their research. In this webcast, Multi-Cloud Storage: Addressing the Need for Portability and Interoperability, my SNIA Cloud colleague, Mark Carlson, and John will discuss enterprise hybrid cloud objectives and barriers to adoption. John and Mark will focus on cloud interoperability within the storage domain and the CSI’s work that promotes interoperability and portability of data stored in the cloud.

Expert Answers to Cloud Object Storage and Gateways Questions

In our most recent SNIA Cloud webcast, “Cloud Object Storage and the Use of Gateways,” we discussed market trends toward the adoption of object storage and the use of gateways to execute on a cloud strategy. If you missed the live event, it’s now available on-demand together with the webcast slides. There were many good questions at the live event and our expert, Dan Albright, has graciously answered them in this blog.

Q. Can object storage be accessed by tools for use with big data?

A. Yes. Technically, big data tools can access object storage in real time through HDFS connectors such as S3, but how well this works depends on latency. If the object storage is based on local hard drives, it should not be used as primary storage because it would run very slowly. The guidance is to use hard drive based object storage either as an online archive or as a backup target for HDFS.

Q. Will current block storage or NAS be replaced with cloud object storage + gateway?

A. Yes and no. It’s dependent on the use case. For ILM (Information Lifecycle Management) uses, only the aged and infrequently accessed data is moved to the gateway+cloud object storage, to take advantage of a lower cost tier of storage, while the more recent and active data remains on the primary block or file storage. For file sync and share, the small office/remote office data is moved off of the local NAS and consolidated/centralized and managed on the gateway file system. In practice, these methods will vary based on the enterprise’s requirements.
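The ILM split described above can be sketched in a few lines. This is a minimal illustration only: the 90-day idle cutoff, the `FileRecord` type and the file paths are assumptions made for the example, not values from the webcast or from any gateway product.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class FileRecord:
    path: str
    last_access: float  # POSIX timestamp of the most recent access


def split_by_age(files, now, max_idle_days=90):
    """Return (active, aged): files touched within the cutoff, and files idle longer."""
    cutoff = now - max_idle_days * SECONDS_PER_DAY
    active = [f for f in files if f.last_access >= cutoff]
    aged = [f for f in files if f.last_access < cutoff]
    return active, aged


# Fixed "current time" so the example is repeatable (hypothetical data).
now = 1_700_000_000.0
files = [
    FileRecord("/projects/q4-report.docx", now - 2 * SECONDS_PER_DAY),
    FileRecord("/projects/2012-archive.tar", now - 400 * SECONDS_PER_DAY),
    FileRecord("/home/shared/logo.png", now - 95 * SECONDS_PER_DAY),
]

active, aged = split_by_age(files, now)
print("stay on primary storage:", [f.path for f in active])
print("candidates for gateway + object storage:", [f.path for f in aged])
```

In a real deployment the cutoff would come from the enterprise’s own policy, and the gateway, not a script, would typically do the moving.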

Q. Can we use cloud object storage for IoT storage that may require high IOPS?

A. High IOPS workloads are best supported by local SSD based Object, Block or NAS storage. Remote or hard drive based Object storage is better deployed with low IOPS workloads.

Q. What about software defined storage?

A. Cloud object storage may be implemented as SDS (Software Defined Storage) but may also be implemented by dedicated appliances. Most cloud Object storage services are SDS based.

Q. Can you please define NAS?

A. The SNIA Dictionary defines Network Attached Storage (NAS) as:

1. [Storage System] A term used to refer to storage devices that connect to a network and provide file access services to computer systems. These devices generally consist of an engine that implements the file services, and one or more devices, on which data is stored.

2. [Network] A class of systems that provide file services to host computers using file access protocols such as NFS or CIFS.

Q. What are the challenges with NAS gateways into object storage? Aren’t there latency issues that NAS requires that aren’t available in a typical Object store solution?

A. The key factor to consider is workload. If the applications accessing data residing on NAS perform a high frequency of reads and writes, then that data is not a good candidate for remote or hard drive based object storage. However, it is commonly known that up to 80% of data residing on NAS is infrequently accessed. It is this data that is best suited for migration to remote object storage.

Thanks for all the great questions. Please check out our library of SNIA Cloud webcasts to learn more. And follow us on Twitter @SNIACloud for announcements of future webcasts.

How Gateways Benefit Cloud Object Storage

The use of cloud object storage is ramping up sharply especially in the public cloud, where its simplicity can significantly reduce capital budgets and operating expenses. And while it makes good economic sense, enterprises are challenged with legacy applications that do not support standard protocols to move data to and from the cloud.

That’s why the SNIA Cloud Storage Initiative is hosting a live webcast on September 26th, “Cloud Object Storage and the Use of Gateways.”

Object storage is a secure, simple, scalable, and cost-effective means of managing the explosive growth of unstructured data enterprises generate every day. Enterprises have developed data strategies specific to the public cloud: improved data protection, long term archive, application development, DevOps, Data Science, and cognitive artificial intelligence, to name a few.

However, these same organizations have legacy applications and infrastructure that are not object storage friendly, but use file protocols like NFS and SMB. Gateways enable SMB and NFS data transfers to be converted to Amazon’s S3 protocol while optimizing data with deduplication, providing QoS (quality of service), and efficiencies on the data path to the cloud.
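To make the deduplication step on the data path more concrete, here is a minimal sketch of content-hash deduplication. It assumes fixed-size chunks and SHA-256 digests purely for illustration; real gateways may use variable-size chunking and different hashing, and the function names here are hypothetical.

```python
import hashlib


def dedupe_chunks(data: bytes, chunk_size: int = 4):
    """Split data into fixed-size chunks and keep each unique chunk only once."""
    store = {}   # digest -> chunk bytes (what would actually be uploaded)
    recipe = []  # ordered list of digests needed to rebuild the original data
    for i in range(0, len(data), chunk_size):
        chunk = data[i:i + chunk_size]
        digest = hashlib.sha256(chunk).hexdigest()
        store.setdefault(digest, chunk)  # store the chunk only the first time it is seen
        recipe.append(digest)
    return store, recipe


def rebuild(store, recipe):
    """Reassemble the original byte stream from the stored chunks."""
    return b"".join(store[d] for d in recipe)


payload = b"ABCDABCDABCDWXYZ"  # three identical chunks plus one different chunk
store, recipe = dedupe_chunks(payload)
print(f"{len(recipe)} chunks referenced, {len(store)} chunks stored")
```

Only the unique chunks would need to cross the network to the object store, which is where the savings on the data path come from.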

This webcast will highlight the market trends toward the adoption of object storage and the use of gateways to execute a cloud strategy, the benefits of object storage when gateways are deployed, and the use cases that are best suited to leverage this solution.

You will learn:

- The benefits of object storage when gateways are deployed

- Primary use cases for using object storage and gateways in private, public or hybrid cloud

- How gateways can help achieve the goals of your cloud strategy without retooling your on-premise infrastructure and applications

We plan to share some pearls of wisdom on the challenges organizations are facing with object storage in the cloud from a vendor-neutral, SNIA perspective. If you need a firm background on cloud object storage before September 26th, I encourage you to watch the SNIA Cloud on-demand webcast, “Cloud Object Storage 101.” It will provide you with a foundation to get even more out of this upcoming webcast.

I hope you will join us on September 26th. Register now to save your spot.

Containers, Docker and Storage: An Introduction

Containers are the latest innovation in packaging, managing and deploying distributed applications. On October 6th, the SNIA Cloud Storage Initiative will host a live webcast, “Intro to Containers, Container Storage Challenges and Docker.” Together with our guest speaker from Docker, Keith Hudgins, we’ll begin by introducing the concept of containers. You’ll learn what they are, the advantages they bring (illustrated by use cases), why you might want to consider them as an app deployment model, and how they differ from VMs or bare metal deployment environments.

We’ll follow up with a look at what is required from a storage perspective, specifically when supporting stateful applications, using Docker, one of the leading software containerization platforms that provides a lightweight, open and secure environment for the deployment and management of containers. Finally, we’ll round out our Docker introduction by presenting a few key takeaways from DockerCon, the industry-leading event for makers and operators of distributed applications built on Docker, that recently took place in Seattle in June of this year.

Join us for this discussion on:

- Application deployment history

- Containers vs. virtual machines vs. bare metal

- Factors driving containers and common use cases

- Storage ecosystem and features

- Container storage table stakes (focus on Enterprise-class storage services)

- Introduction to Docker

- Key takeaways from DockerCon 2016

This event is live, so we’ll be on hand to answer your questions. Please register today. We hope to see you on Oct. 6th!

Q&A – OpenStack Mitaka and Data Protection

At our recent SNIA Webcast “Data Protection and OpenStack Mitaka,” Ben Swartzlander, Project Team Lead OpenStack Manila (NetApp), and Dr. Sam Fineberg, Distinguished Technologist (HPE), provided terrific insight into data protection capabilities surrounding OpenStack. If you missed the Webcast, I encourage you to watch it on-demand at your convenience. We did not have time to get to all of our attendees’ questions during the live event, so as promised, here are answers to the questions we received.

Q. Why are there NFS drivers for Cinder?

A. It’s fairly common in the virtualization world to store virtual disks as files in filesystems. NFS is widely used to connect hypervisors to storage arrays for the purpose of storing virtual disks, which is Cinder’s main purpose.

Q. What does “crash-consistent” mean?

A. It means that the data on disk is what would be there if the system “crashed” at that point in time. In other words, the data reflects the order of the writes, and if any writes are lost, they are the most recent writes. To avoid losing data with a crash-consistent snapshot, one must force all recently written data and metadata to be flushed to disk prior to snapshotting, and prevent further changes during the snapshot operation.
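As a rough illustration of the “flush before snapshotting” step, the sketch below forces one file’s recently written data out of application and operating system buffers using standard Python calls. It is an application-level example only; the file name is hypothetical, and quiescing writers and taking the snapshot itself are outside its scope.

```python
import os
import tempfile


def write_durably(path, payload):
    """Write payload and ask the OS to push it to the storage device before returning."""
    with open(path, "wb") as f:
        f.write(payload)
        f.flush()             # move data from Python's buffer to the operating system
        os.fsync(f.fileno())  # ask the operating system to write the file through to disk


with tempfile.TemporaryDirectory() as tmp:
    target = os.path.join(tmp, "journal.dat")  # hypothetical file name
    write_durably(target, b"committed record\n")
    with open(target, "rb") as f:
        readback = f.read()

print(readback)
```

Without the flush and `fsync` calls, a snapshot taken right after `write()` could miss the newest data, which is exactly the “most recent writes are lost” case described above.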

Q. How do you recover from a Cinder replication failover?

A. The system will continue to function after the failover. However, there is currently no mechanism to “fail-back” or “re-replicate” the volumes. This function is currently in development, and the OpenStack community will have a solution in a future release.

Q. What is a Cinder volume type?

A. Volume types are administrator-defined “menu choices” that users can select when creating new volumes. They contain hidden metadata, in the cinder.conf file, which Cinder uses to decide where to place them at creation time, and which drivers to use to configure them when created.
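The idea of a volume type as a “menu choice” backed by hidden placement metadata can be pictured with a toy matcher. This is not Cinder’s scheduler code: the type names, backend names and capability keys below are all invented for the illustration.

```python
# Hypothetical volume types: the user sees only the name, not the requirements.
VOLUME_TYPES = {
    "gold": {"media": "ssd", "replicated": True},
    "bronze": {"media": "hdd"},
}

# Hypothetical backends and the capabilities each one reports.
BACKENDS = {
    "array-a": {"media": "ssd", "replicated": True},
    "array-b": {"media": "hdd", "replicated": False},
    "array-c": {"media": "ssd", "replicated": False},
}


def eligible_backends(type_name):
    """Return the backends whose capabilities satisfy every requirement of the type."""
    required = VOLUME_TYPES[type_name]
    return sorted(
        name
        for name, caps in BACKENDS.items()
        if all(caps.get(key) == value for key, value in required.items())
    )


print("gold ->", eligible_backends("gold"))
print("bronze ->", eligible_backends("bronze"))
```

The user only picks “gold” or “bronze”; the administrator decides what those names require and therefore where the volume can land.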

Q. Can you replicate when multiple Cinder backends are in use?

A. Yes.

Q. What makes a Cinder “backup” different from a Cinder “snapshot”?

A. Snapshots are used for preserving the state of a volume from changes, allowing recovery from software or user errors, and also allowing a volume to remain stable long enough for it to be backed up. Snapshots are also very efficient to create, since many devices can create them without copying any data. However, snapshots are local to the primary data and typically have no additional protection from hardware failures. In other words, the snapshot is stored on the same storage devices and typically shares disk blocks with the original volume.

Backups are stored in a neutral format which can be restored anywhere and typically on separate (possibly remote) hardware, making them ideal for recovery from hardware failures.

Q. Can you explain what “share types” are and how they work?

A. They are Manila’s version of Cinder’s volume types. One key difference is that some of the metadata about them is not hidden and is visible to end users. Certain APIs work with shares of types that have specific capabilities.

Q. What’s the difference between Cinder’s multi-attached and Manila’s shared file system?

A. Multi-attached Cinder volumes require cluster-aware filesystems or similar technology to be used on top of them. Ordinary filesystems cannot handle multi-attachment and will corrupt data quickly if attached to more than one system. Therefore Cinder’s multi-attach mechanism is only intended for filesystems or database software that is specifically designed to use it.

Manila’s shared filesystems use industry standard network protocols, like NFS and SMB, to provide filesystems to arbitrary numbers of clients where shared access is a fundamental part of the design.

Q. Is it true that failover is automatic?

A. No. Failover is not automatic for Cinder or Manila.

Q. Follow-up on failure, my question was for the array-loss scenario described in the Block discussion. Once the admin decides the array has failed, does it need to perform failover on a “VM-by-VM basis”? How does the VM know to re-attach to another Fabric, etc.?

A. Failover is all at once, but VMs do need to be reattached one at a time.

Q. What about Cinder? Is unified object storage on SHV server the future of storage?

A. This is a matter of opinion. We can’t give an unbiased response.

Q. What about a “global file share/file system view” of a lot of Manila “file shares” (i.e. a scalable global name space…)

A. Shares have disjoint namespaces intentionally. This allows Manila to provide a simple interface which works with lots of implementations. A single large namespace could be more valuable but would preclude many implementations.

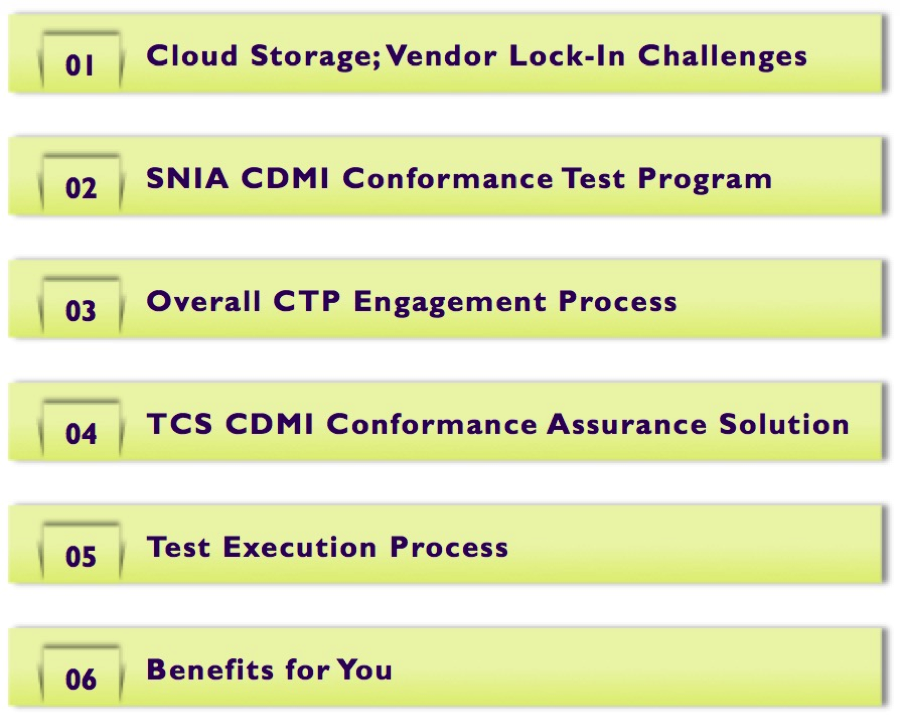

Cloud Storage: Solving Interoperability Challenges

Cloud storage has transformed the storage industry; however, interoperability challenges that were overlooked during the initial stages of growth are now emerging as front and center issues. I hope you will join us on July 19th for our live Webcast, “Cloud Storage: Solving Interoperability Challenges,” to learn about the major challenges of using business services from multiple cloud providers and of moving data from one cloud provider to another.

We’ll discuss how the SNIA Cloud Data Management Interface standard (CDMI) addresses these challenges by offering data and metadata portability between clouds and explain how the SNIA CDMI Conformance Test Program helps cloud storage providers achieve CDMI conformance.
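For readers who have not seen CDMI on the wire, the sketch below builds (but does not send) an HTTP request that stores a small data object together with user metadata. The content type and version header follow the CDMI specification as I understand it; the endpoint URL, object path and metadata key are placeholders, and the specification version string is an assumption to check against the provider you target.

```python
import json
import urllib.request


def build_cdmi_put(base_url, object_path, text, metadata):
    """Build a CDMI-style PUT request for a data object; nothing is sent over the network."""
    body = json.dumps(
        {"mimetype": "text/plain", "metadata": metadata, "value": text}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/{object_path.lstrip('/')}",
        data=body,
        method="PUT",
        headers={
            "Content-Type": "application/cdmi-object",
            "Accept": "application/cdmi-object",
            "X-CDMI-Specification-Version": "1.1.1",  # assumed version string
        },
    )


# Placeholder endpoint and metadata, for illustration only.
request = build_cdmi_put(
    "https://cloud.example.com/cdmi",
    "/archive/note.txt",
    "hello",
    {"department": "finance"},
)
print(request.get_method(), request.full_url)
```

Because the metadata travels in the same standard representation as the data, another conformant cloud can, in principle, accept the object without translation.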

Join us on July 19th to learn:

- Critical challenges that the cloud storage industry is facing

- Issues in a multi-cloud API environment

- Addressing cloud storage interoperability challenges

- How the CDMI standard works

- Benefits of CDMI conformance testing

- Benefits for end user companies

You can register today. We look forward to seeing you on July 19th.

On-Demand Cloud Storage Webcasts Worth Watching

As the SNIA Cloud Storage Initiative (CSI) starts our 2016 with a new set of educational programs and webcasts on topics of interest to those developing, implementing & managing cloud storage, I thought it might be a good time to remind everyone of the vendor-neutral educational work the CSI has delivered in 2015.

I’m particularly proud of the work the CSI has done through BrightTalk (a web based content delivery platform) in producing live hour-long tutorials on a wide variety of subjects.

What you may not know is that these are also recorded, and you can play them back when it’s convenient to you. I know that we have a global audience, and that when we deliver the live version it may be in the middle of your busy working day – or even in the middle of the night.

As part of SNIA, the CSI supports the development of technical storage standards; and that means some of our audience are developers. For those of you that are interested in more technical presentations we had two developer focussed BrightTalks:

Hierarchical Erasure Coding: Making Erasure Coding Usable

This talk covered two different approaches to erasure coding – a flat erasure code across JBOD, and a hierarchical code with an inner code and an outer code; it compared the two approaches on different parameters that impact the IT business and provided guidance on evaluating object storage solutions.
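One parameter on which the two approaches can be compared is raw-to-usable storage overhead. The arithmetic below is a sketch under assumed code parameters (10+4 for the flat code; 8+2 inner and 4+1 outer for the hierarchical code); these numbers are illustrative and do not come from the talk.

```python
from fractions import Fraction


def overhead(data_fragments, parity_fragments):
    """Raw capacity consumed per unit of usable data for a (data + parity) erasure code."""
    return Fraction(data_fragments + parity_fragments, data_fragments)


# Flat code across all drives: 10 data + 4 parity fragments (assumed parameters).
flat = overhead(10, 4)

# Hierarchical code: each outer fragment is itself protected by the inner code,
# so the two overheads multiply (assumed 8+2 inner, 4+1 outer).
hierarchical = overhead(8, 2) * overhead(4, 1)

print(f"flat: {float(flat):.4f}x raw per usable byte")
print(f"hierarchical: {float(hierarchical):.4f}x raw per usable byte")
```

Overhead is only one axis; rebuild traffic and failure tolerance differ between the two layouts as well and would need their own analysis.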

Expert Panel: Cloud Storage Initiatives – An SDC Preview

At the 2015 Storage Developer Conference (SDC) we presented on a variety of topics:

- Mobile and Secure – Cloud Encrypted Objects using CDMI

- Object Drives: A new Architectural Partitioning

- Unistore: A Unified Storage Architecture for Cloud Computing

- Using CDMI to Manage Swift, S3, and Ceph Object Repositories

We discussed how encrypted objects can be stored, retrieved, and transferred between clouds, how Object Drives allow storage to scale up and down by single drive increments, end-user and vendor use cases of the Cloud Data Management Interface (CDMI), and we introduced Unistore – an innovative unified storage architecture that efficiently integrates heterogeneous HDD and SCM devices for Cloud storage systems.

(As an added bonus, all these SDC 2015 presentations and others can be found here http://www.snia.org/events/storage-developer/presentations15.)

OpenStack has had a big year, and the CSI contributed to the discussion with:

OpenStack File Services for High Performance Computing

We looked at how OpenStack can consume and control file services appropriate to High Performance Compute in a cloud and multi-tenanted environment and investigated two approaches to integration. One approach is to have OpenStack manage the storage infrastructure services using Cinder, Nova and Neutron to provide HPC Filesystem as a Service. We also reviewed a second option of using Manila file services for OpenStack to control the HPC File system deployment and manage the exports etc. We discussed the development of the Lustre Manila driver and its current progress.

Hybrid clouds were also in the news. We delivered two sessions, specifically targeted at end users looking to understand the technologies:

Hybrid Clouds: Bridging Private & Public Cloud Infrastructures

Every IT consumer is using cloud in one form or another, and just as storage buyers are reluctant to select a single vendor for their on-premises IT, they will choose to work with multiple public cloud providers. But this desirable “many vendor” cloud strategy introduces new problems of compatibility and integration. To provide a seamless view of these discrete storage clouds, Software Defined Storage (SDS) can be used to build a bridge between them. This presentation explored how SDS, with its ability to deploy on different hardware and its support for rich automation capabilities, can extend its reach into cloud deployments to support a hybrid data fabric that spans on-premises and public clouds.

Hybrid Clouds Part 2: Case Study on Building the Bridge between Private & Public

There are significant differences in how cloud services are delivered to various categories of users. The integration of these services with traditional IT operations remains an important success factor but also a challenge for IT managers. The key to success is to build a bridge between private and public clouds. This Webcast expanded on the previous Hybrid Clouds: Bridging Private & Public Cloud Infrastructures webcast where we looked at the choices and strategies for picking a cloud provider for public and hybrid solutions.

Lastly, we looked at some of the issues surrounding data protection and data privacy (no, they’re not the same thing at all!).

Privacy v Data Protection: The Impact of Int’l Data Protection Legislation on Cloud

Governments across the globe are proposing and enacting strong data privacy and data protection regulations by mandating frameworks that include noteworthy changes like defining a data breach to include data destruction, adding the right to be forgotten, mandating the practice of breach notifications, and many other new elements. The implications of this and other proposed legislation on how the cloud can be utilized for storing data are significant. This webcast covered:

- EU “directives” vs. “regulation”

- General data protection regulation summary

- How personal data has been redefined

- Substantial financial penalties for non-compliance

- Impact on data protection in the cloud

- How to prepare now for impending changes

Moving Data Protection to the Cloud: Trends, Challenges and Strategies

This was a panel discussion; we talked about various new ways to perform data protection using the Cloud and many advantages of using the Cloud this way.

You can access all the CSI BrightTalk Webcasts on demand at the SNIA Website. Many of you will also be happy to learn that PDFs of the Webcast slides are also available there.

We had a good 2015, and I’m looking forward to producing more great educational material during 2016. If you have a topic you’d like to see the CSI cover this year, please comment below in this blog. We value input from all.

Thanks for your support and hopefully we’ll see you some time this year at one of our BrightTalk webcasts.

Exploring the Software Defined Data Center – A SNIA Cloud Webcast

SNIA Cloud is pleased to announce our next live Webcast, “Exploring the Software Defined Data Center.” A Software Defined Data Center (SDDC) is a compute facility in which all elements of the infrastructure – networking, storage, CPU and security – are virtualized and removed from proprietary hardware stacks. Deployment, provisioning and configuration, as well as the operation, monitoring and automation of the entire environment, are abstracted from hardware and implemented in software.

The results of this software-defined approach include maximizing agility and minimizing cost, benefits that appeal to IT organizations of all sizes. In fact, understanding SDDC concepts can help IT professionals in any organization better apply these software defined concepts to storage, networking, compute and other infrastructure decisions.

If you’re interested in Software Defined Data Centers and how such a thing might be implemented – and why this concept is important to IT professionals who aren’t involved with building data centers – then please join us on March 15th as Eric Slack, Sr. Analyst with Evaluator Group, will explain what “software defined” really means and why it’s important to all IT organizations. Eric will be joined by Alex McDonald, Chair for SNIA’s Cloud Storage Initiative who will talk about how these concepts apply to the modern data center.

Register now as we’ll explore:

- How an SDDC leverages this concept to make the private cloud feasible

- How we can apply SDDC concepts to an existing data center

- How to develop your own software defined data center environment

As always, this Webcast will be live. Eric, Alex and I will be on hand to answer your questions. We hope you’ll join us on March 15th.